Quantum breakthroughs are accelerating faster than most infrastructure roadmaps can keep up with. If you’re searching for clear, up‑to‑date insight into quantum computing hardware, you’re likely trying to understand what’s practical today, what’s experimental, and what developments could reshape digital infrastructure tomorrow.

This article delivers exactly that. We examine the latest advancements in processor design, cryogenic systems, qubit stability, and supporting architectures—cutting through speculation to focus on real-world engineering progress. You’ll gain clarity on how emerging hardware trends influence scalability, security, and long-term adoption strategies.

Our analysis draws on verified technical documentation, archived protocol research, and cross-referenced industry reports to ensure accuracy and relevance. Instead of hype, we provide grounded insight into where the hardware ecosystem truly stands—and what it means for developers, infrastructure planners, and forward-looking technologists preparing for the next era of computational power.

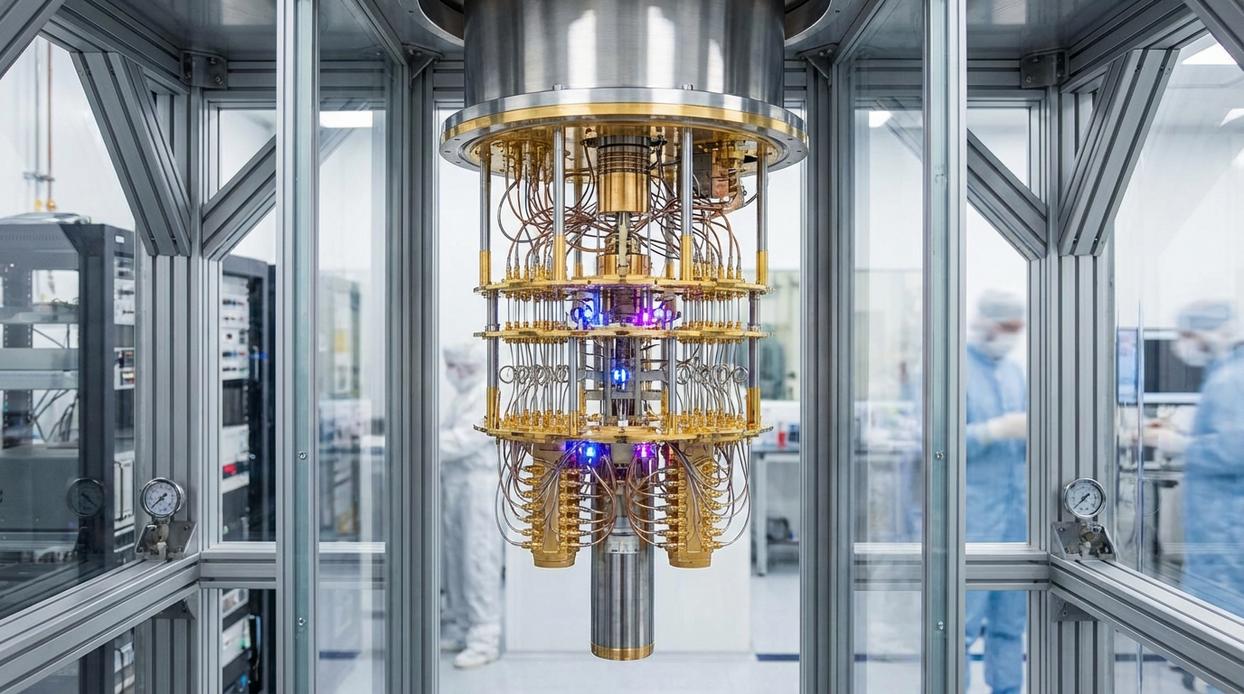

Classical computers live in a world of ones and zeros; a transistor is either on or off. Quantum systems, by contrast, operate on probabilities: a qubit can exist in superposition, a weighted combination of 0 and 1 that only resolves into a definite value when measured (yes, like Schrödinger’s famous cat). However, that power rests on delicate engineering. Many of today’s leading quantum processors rely on superconducting circuits cooled to nearly absolute zero, often around 15 millikelvin, using dilution refrigerators (IBM, 2023). Error rates also remain significant: in its 2019 experiments, Google reported single-qubit gate error rates near 0.1%, with two-qubit gates and readout noticeably noisier. Consequently, shielding, cryogenics, and precision control electronics form the unseen backbone that makes reliable computation possible.

The Quantum Bit (Qubit): Architectures of Superposition

At the heart of quantum computing lies the qubit, or quantum bit, the basic unit of quantum information. Unlike a classical bit (which is either 0 or 1), a qubit can exist in superposition: a weighted combination of 0 and 1, where the weights (called amplitudes) determine the probability of each outcome when the qubit is measured. Add entanglement, in which qubits share a joint state whose measurement outcomes are correlated more strongly than any classical system allows, and the description grows fast: n qubits need 2^n amplitudes to describe fully. That exponentially large state space is what lets quantum processors approach certain problems in fundamentally new ways (yes, it sounds like science fiction, but it’s lab-tested reality).
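To make superposition and entanglement concrete, here is a minimal state-vector sketch in plain Python, assuming no quantum SDK. It prepares a two-qubit Bell state with a Hadamard gate followed by a CNOT gate. The function names are our own, and simulating qubits this way only works for a handful of them, which is exactly the point of the 2^n growth.

```python
import math
import random

# Basis order for two qubits: index = 2*q0 + q1, i.e. |00>, |01>, |10>, |11>.
BASIS = ["00", "01", "10", "11"]


def hadamard_q0(state):
    """Hadamard on qubit 0: turns |0> into an equal superposition of |0> and |1>."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]


def cnot_q0_to_q1(state):
    """CNOT with qubit 0 as control: flips qubit 1 whenever qubit 0 is 1."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def probabilities(state):
    """Born rule: each outcome's probability is the squared magnitude of its amplitude."""
    return [abs(a) ** 2 for a in state]


def measure(state, shots, seed=0):
    """Sample measurement outcomes; each shot yields exactly one basis state."""
    rng = random.Random(seed)
    return rng.choices(BASIS, weights=probabilities(state), k=shots)


start = [1 + 0j, 0j, 0j, 0j]          # both qubits in |0>
superposed = hadamard_q0(start)       # (|00> + |10>) / sqrt(2)
bell = cnot_q0_to_q1(superposed)      # (|00> + |11>) / sqrt(2)

print([round(p, 3) for p in probabilities(superposed)])  # [0.5, 0.0, 0.5, 0.0]
print([round(p, 3) for p in probabilities(bell)])        # [0.5, 0.0, 0.0, 0.5]
print(set(measure(bell, shots=1000)))  # only '00' and '11' ever appear
print([2 ** n for n in (10, 20, 50)])  # amplitudes needed for n qubits
```

Each qubit of the Bell state, read on its own, looks like a fair coin, yet the two outcomes always agree. That correlation, not any signal passing between the qubits, is what entanglement provides.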

Superconducting Qubits vs. Trapped-Ion Qubits

First, superconducting qubits, most commonly transmons, are tiny circuits that act like artificial atoms. They operate at cryogenic temperatures near absolute zero and are controlled using microwave pulses. The advantage? Speed and scalability. Companies such as IBM and Google favor them partly because fabrication borrows from existing chip-manufacturing techniques. However, maintaining dilution refrigerators is complex and energy-intensive.

In contrast, trapped-ion qubits suspend individual charged atoms in electromagnetic fields and manipulate them with finely tuned lasers. While gate operations are slower, they offer exceptionally high fidelity (the accuracy of quantum operations) and long coherence times (how long a qubit retains its quantum information). Side by side, superconducting systems prioritize speed, while trapped ions prioritize precision.
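A little arithmetic shows why both speed and precision matter. Under the simplifying assumption that gate errors are independent, a circuit of d gates, each with fidelity F, succeeds with probability of roughly F^d; separately, the number of gates a qubit can run is capped by its coherence time divided by its gate time. The fidelities and timings below are illustrative placeholders, not published figures for any platform.

```python
def circuit_success(gate_fidelity: float, num_gates: int) -> float:
    """Chance that every gate succeeds, assuming independent, identical gate errors."""
    return gate_fidelity ** num_gates


def gates_per_coherence_window(coherence_time_ns: int, gate_time_ns: int) -> int:
    """Number of back-to-back gates that fit inside one coherence time."""
    return coherence_time_ns // gate_time_ns


# Illustrative fidelities: each extra "nine" matters enormously over 1,000 gates.
for fidelity in (0.99, 0.999, 0.9999):
    print(f"F = {fidelity}: success ~ {circuit_success(fidelity, 1000):.2e}")

# Hypothetical round numbers, not benchmarks of any real device:
# a fast platform (50 ns gates, 100 us coherence) versus a slow, stable one
# (100 us gates, 1 s coherence).
print(gates_per_coherence_window(100_000, 50))             # 2000
print(gates_per_coherence_window(1_000_000_000, 100_000))  # 10000
```

In this toy model, moving from 99% to 99.9% fidelity turns a 1,000-gate circuit from near-certain failure into a better than one-in-three chance of success, which is why precision-first platforms can compete despite slower gates.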

Emerging Modalities

Meanwhile, photonic qubits encode information in photons (individual particles of light), potentially enabling room-temperature operation and quantum networking. Silicon spin qubits, on the other hand, store data in the spin of an electron within silicon, leveraging decades of semiconductor expertise.

Ultimately, each architecture reflects a different philosophy of quantum computing hardware: scale fast, compute accurately, or integrate seamlessly. Choosing between them isn’t unlike picking a spaceship in a sci-fi saga—warp speed or targeting precision?

The Cryogenic Challenge: Engineering Absolute Zero

“Heat is the enemy,” one quantum engineer told me. “Even a whisper of it can collapse a qubit.” That collapse is called quantum decoherence—the process where environmental “noise” (random thermal motion, stray radiation, or magnetic interference) disrupts a qubit’s superposition, forcing it into an ordinary classical state. In short, warmth equals error.
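One common simplified picture of decoherence is pure dephasing. In the qubit’s density matrix, the diagonal entries (the odds of reading 0 or 1) stay put, while the off-diagonal “coherence” terms that carry the superposition decay roughly as exp(−t/T2). The sketch below assumes that exponential model and a hypothetical T2 of 100 µs; real devices show messier noise.

```python
import math


def dephase(rho, elapsed_us, t2_us):
    """Pure-dephasing model: off-diagonal terms decay as exp(-t/T2); populations are unchanged."""
    decay = math.exp(-elapsed_us / t2_us)
    return [[rho[0][0], rho[0][1] * decay],
            [rho[1][0] * decay, rho[1][1]]]


# Density matrix of the equal superposition (|0> + |1>) / sqrt(2).
plus = [[0.5, 0.5], [0.5, 0.5]]

for t_us in (0, 50, 100, 500):
    rho = dephase(plus, t_us, t2_us=100)  # T2 = 100 us is a hypothetical value
    print(f"t = {t_us:>3} us  coherence = {rho[0][1]:.3f}  populations = {rho[0][0]}, {rho[1][1]}")
```

Once the off-diagonal terms reach zero, the matrix describes a plain 50/50 coin flip: the same odds as before, but with no quantum interference left to exploit.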

So how cold is cold enough? Enter dilution refrigerators.

Inside a Dilution Refrigerator

These multi-stage systems circulate a mixture of helium-3 and helium-4. Below roughly 0.87 K the mixture separates into a helium-3-rich phase and a dilute phase, and drawing helium-3 across the boundary between them absorbs heat, much as evaporation cools a liquid. That process pushes the coldest stage below 15 millikelvin, colder than deep space (NASA notes space averages about 2.7 K). “It’s like building a staircase to absolute zero,” another researcher explained.

| Cooling Stage | Approximate Temperature | Purpose |
|---------------|-------------------------|---------|
| 50 K stage | ~50 K (about -223 °C) | Initial thermal shielding |
| 4 K stage | ~4 K (about -269 °C) | Pre-cooling with liquid helium |
| Mixing chamber | <15 mK | Qubit operation zone |
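A quick calculation shows why each stage matters. Treating the qubit as a harmonic mode at an assumed 5 GHz (within the few-gigahertz range typical of transmons), the Bose-Einstein formula n̄ = 1/(e^(hf/k_BT) − 1) gives the average number of thermal excitations at each stage’s temperature. This is a simplified equilibrium sketch, not a model of any particular refrigerator.

```python
import math

H = 6.62607015e-34   # Planck constant, J*s (exact SI value)
K_B = 1.380649e-23   # Boltzmann constant, J/K (exact SI value)


def thermal_occupation(freq_hz: float, temp_k: float) -> float:
    """Bose-Einstein mean excitation number of a mode at freq_hz in equilibrium at temp_k."""
    x = H * freq_hz / (K_B * temp_k)
    return 1.0 / math.expm1(x)  # expm1 keeps precision when x is small


QUBIT_FREQ_HZ = 5e9  # assumed 5 GHz qubit transition

for label, temp_k in [("50 K stage", 50.0), ("4 K stage", 4.0), ("mixing chamber", 0.015)]:
    print(f"{label:>15}: mean thermal excitations ~ {thermal_occupation(QUBIT_FREQ_HZ, temp_k):.3g}")
```

Under these assumptions, the 50 K and 4 K stages leave the mode with many thermal excitations on average, while the mixing chamber brings the figure down to roughly one in ten million. That is why the qubits sit at the bottom of the staircase.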

However, cooling alone isn’t enough. Superconducting coaxial wiring carries signals into the cold stages while adding very little heat, and layered magnetic shielding blocks stray fields. Without this protection, qubits would decohere far too quickly to run useful computations.

Critics argue such systems are impractical outside labs. Yet similar precision engineering drives advances in next-generation wearable devices and smart sensors. Today’s cryogenic giants could be tomorrow’s compact breakthroughs.

Precision at Scale: The Control and Readout Stack

At the heart of quantum computing hardware lies a deceptively simple challenge: control a qubit without ruining it. In practice, that means sending ultra-precise analog signals—microwaves for superconducting systems or laser pulses for trapped ions—to perform quantum gates (the basic operations that change a qubit’s state).

On one side, you have Arbitrary Waveform Generators (AWGs). These devices create finely shaped electrical pulses, controlling amplitude, phase, and timing down to nanoseconds. Think of them as digital composers crafting exact musical notes. On the other side are high-frequency signal sources, which provide stable carrier waves. Without stability here, even perfect waveforms drift off-key (and quantum systems are far less forgiving than a garage band).
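To make the AWG’s job concrete, here is a minimal sketch of one common pulse shape: a Gaussian envelope split into in-phase (I) and quadrature (Q) samples. The function name, the 1 GS/s default sample rate, and the parameter values are hypothetical and not tied to any instrument’s API. In the usual rotating-frame convention, a phase of 0 rotates the qubit about the X axis and a phase of π/2 about the Y axis, while the pulse’s amplitude and area set the rotation angle.

```python
import math


def gaussian_iq_pulse(amplitude, phase_rad, duration_ns, sigma_ns, sample_rate_gsps=1.0):
    """Return (I, Q) sample pairs for a Gaussian-envelope control pulse.

    amplitude scales the envelope, phase_rad selects the rotation axis, and
    duration_ns with sample_rate_gsps fixes the timing grid (1 GS/s = 1 sample per ns).
    """
    n_samples = int(round(duration_ns * sample_rate_gsps))
    dt_ns = 1.0 / sample_rate_gsps
    center_ns = (n_samples - 1) * dt_ns / 2
    pulse = []
    for k in range(n_samples):
        t_ns = k * dt_ns
        envelope = amplitude * math.exp(-0.5 * ((t_ns - center_ns) / sigma_ns) ** 2)
        # Splitting the envelope into I and Q components encodes the carrier phase.
        pulse.append((envelope * math.cos(phase_rad), envelope * math.sin(phase_rad)))
    return pulse


x_pulse = gaussian_iq_pulse(amplitude=0.5, phase_rad=0.0, duration_ns=40, sigma_ns=10)
y_pulse = gaussian_iq_pulse(amplitude=0.5, phase_rad=math.pi / 2, duration_ns=40, sigma_ns=10)
print(len(x_pulse), round(max(i for i, _ in x_pulse), 4))
```

Changing only `phase_rad` moves all the energy from the I channel to the Q channel, which is how the same envelope can drive rotations about different axes.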

Now compare control with readout. Control pushes energy in; readout carefully listens. In superconducting setups, each qubit couples to a microwave resonator, a tiny circuit whose frequency shifts slightly depending on whether the qubit is in state |0⟩ or |1⟩. Probing the resonator and measuring that shift reveals the answer. However, speed comes at a price: driving the resonator harder to read out faster risks disturbing the qubit and its neighbors, while a slower readout gives the qubit more time to relax out of |1⟩ before the result is in.
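One lever behind the readout trade-off is integration time. The toy model below assumes each qubit state sends the resonator’s response to a different point in the I/Q plane, adds white noise that averages down as 1/√(integration time), and classifies each shot by the nearest expected point. All names and numbers are hypothetical. It deliberately ignores qubit relaxation during the measurement window, which is what eventually makes very long readouts counterproductive.

```python
import math
import random

# Hypothetical I/Q points where the resonator response lands for each qubit state.
CENTER_0 = (1.0, 0.0)
CENTER_1 = (-1.0, 0.0)
NOISE_AT_100_NS = 0.8  # assumed noise spread for a 100 ns integration window


def simulate_shot(state, integration_ns, rng):
    """One noisy I/Q measurement of a qubit prepared in `state` (0 or 1).

    White noise is assumed to average down as 1/sqrt(integration time).
    """
    cx, cy = CENTER_0 if state == 0 else CENTER_1
    sigma = NOISE_AT_100_NS * math.sqrt(100.0 / integration_ns)
    return (rng.gauss(cx, sigma), rng.gauss(cy, sigma))


def classify(point):
    """Nearest-center discrimination: report the state whose expected response is closer."""
    return 0 if math.dist(point, CENTER_0) <= math.dist(point, CENTER_1) else 1


def assignment_fidelity(integration_ns, shots=5000, seed=1):
    """Fraction of shots where the classified state matches the prepared one."""
    rng = random.Random(seed)
    correct = 0
    for _ in range(shots):
        state = rng.randint(0, 1)
        correct += classify(simulate_shot(state, integration_ns, rng)) == state
    return correct / shots


for t_ns in (25, 100, 400):
    print(f"{t_ns:>4} ns integration: fidelity ~ {assignment_fidelity(t_ns):.3f}")
```

In this model, assignment fidelity climbs steadily as integration time grows; adding a relaxation term would bend that curve back down past some optimum.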

So it’s precision vs speed, isolation vs scalability. The winning stack balances both—because in quantum systems, subtle differences decide everything.

Taming Decoherence: The Frontier of Quantum Error Correction

If you’ve followed quantum research for more than five minutes, you’ve heard the same frustration: decoherence. It’s the maddening tendency of qubits to lose their fragile quantum state the moment the environment so much as breathes on them. Heat, radiation, vibration—tiny disturbances cause errors. And those errors pile up fast.

This isn’t a minor bug. It’s the roadblock. Large-scale, useful quantum systems remain out of reach because errors accumulate faster than long computations can finish.

Enter Quantum Error Correction (QEC). Not a software patch. Not a quick firmware update. It’s a hardware-intensive strategy baked directly into quantum computing hardware. The idea is counterintuitive:

- Use many physical qubits together to create one logical qubit.

- Continuously monitor error syndromes.

- Correct faults before they cascade.

A logical qubit is more resilient because errors in individual physical qubits can be detected and fixed collectively. It’s complex, resource-hungry, and painstaking—but without it, scalable quantum computing remains a lab-bound dream.
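The logical-qubit idea can be illustrated with the simplest textbook example, the three-qubit bit-flip repetition code, simulated here with classical bits. This is a teaching toy rather than what production hardware runs: it only catches bit flips (not phase flips), real devices measure parities with ancilla qubits instead of reading the data directly, and leading hardware efforts use more capable schemes such as the surface code. The function names are our own.

```python
from itertools import product


def encode(bit):
    """Repetition encoding: one logical bit is stored as three physical copies."""
    return [bit, bit, bit]


def syndrome(bits):
    """Two parity checks that locate a single flip without revealing the logical value."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])


# Which position to flip back for each syndrome (None means no error detected).
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(bits):
    """Apply the correction implied by the syndrome and return the repaired bits."""
    fixed = list(bits)
    position = CORRECTION[syndrome(fixed)]
    if position is not None:
        fixed[position] ^= 1
    return fixed


def logical_error_rate(p):
    """Exact logical failure probability for independent flips with probability p."""
    failure = 0.0
    for flips in product((0, 1), repeat=3):
        noisy = [b ^ f for b, f in zip(encode(0), flips)]
        if correct(noisy)[0] != 0:  # correction restored the wrong logical value
            k = sum(flips)
            failure += p ** k * (1 - p) ** (3 - k)
    return failure


for p in (0.1, 0.01, 0.001):
    print(p, logical_error_rate(p), 3 * p**2 - 2 * p**3)
```

For a physical flip probability p, the logical error rate works out to 3p² − 2p³, so at p = 1% the encoded bit fails only about 0.03% of the time. Any single flip is repaired; only two or more simultaneous flips get through.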

As of 2026, building a viable quantum computer isn’t about a single breakthrough; it’s about integration. Qubit modalities (the physical forms qubits take) must sync with cryogenic systems operating near absolute zero, while control electronics translate classical instructions into quantum operations. Back in 2019, labs could demonstrate isolated components. After years of iteration, engineers learned that progress in quantum computing hardware depends on tight feedback loops between parts.

System integration is the real milestone.

- Higher fidelity across components

- Scalable architectures that cooperate

Understand the full stack, and fault tolerance stops sounding theoretical and starts looking engineered in practice.

You came here to understand how emerging tech trends—especially quantum computing hardware—are reshaping digital infrastructure and future-proof systems. Now you have a clearer view of where innovation is heading, how archived protocols still influence modern builds, and why staying ahead of hardware evolution is no longer optional.

The real challenge isn’t access to information—it’s keeping up before your setup becomes outdated or inefficient. As infrastructure grows more complex and performance demands increase, falling behind even one cycle can cost time, money, and competitive edge.

Stay Ahead of the Next Hardware Shift

If you’re serious about optimizing your tech stack and anticipating the next wave of breakthroughs, now is the time to act. Subscribe for real-time innovation alerts, explore our in-depth setup tutorials, and track emerging hardware trends before they go mainstream. Thousands rely on our insights to stay prepared—don’t wait until you’re playing catch-up. Upgrade your knowledge today and position your systems for what’s next.

Heathers Gillonuevo writes the kind of archived tech protocols content that people actually send to each other. Not because it's flashy or controversial, but because it's the sort of thing where you read it and immediately think of three people who need to see it. Heathers has a talent for identifying the questions that a lot of people have but haven't quite figured out how to articulate yet — and then answering them properly.

They cover a lot of ground: Archived Tech Protocols, Knowledge Vault, Emerging Hardware Trends, and plenty of adjacent territory that doesn't always get treated with the same seriousness. The consistency across all of it is a certain kind of respect for the reader. Heathers doesn't assume people are stupid, and they don't assume readers know everything either. They write for someone who is genuinely trying to figure something out, because that's usually who's actually reading. That assumption shapes everything from how they structure an explanation to how much background they include before getting to the point.

Beyond the practical stuff, there's something in Heathers's writing that reflects a real investment in the subject: not performed enthusiasm, but the kind of sustained interest that produces insight over time. They have been paying attention to archived tech protocols long enough that they notice things a more casual observer would miss. That depth shows up in the work in ways that are hard to fake.
