If you’re searching for a clear explanation of HTTP evolution, you’re likely trying to understand how the web transformed from simple document sharing into the fast, secure, real-time infrastructure we rely on today. This article breaks down that progression in a practical, easy-to-follow way, covering the key protocol upgrades, performance improvements, and security enhancements that shaped modern internet architecture.

Instead of repeating surface-level summaries, we examine archived technical documentation, infrastructure benchmarks, and implementation case studies to explain what actually changed between early HTTP versions and today’s standards. You’ll see how each stage of HTTP evolution solved specific limitations (latency, connection overhead, encryption gaps) and what those changes mean for developers, IT teams, and digital system builders.

Whether you’re optimizing a setup, studying network protocols, or simply trying to understand why modern websites perform the way they do, this guide delivers the technical clarity and context you’re looking for.

The Genesis: HTTP/0.9 and 1.0 – Laying the Foundation

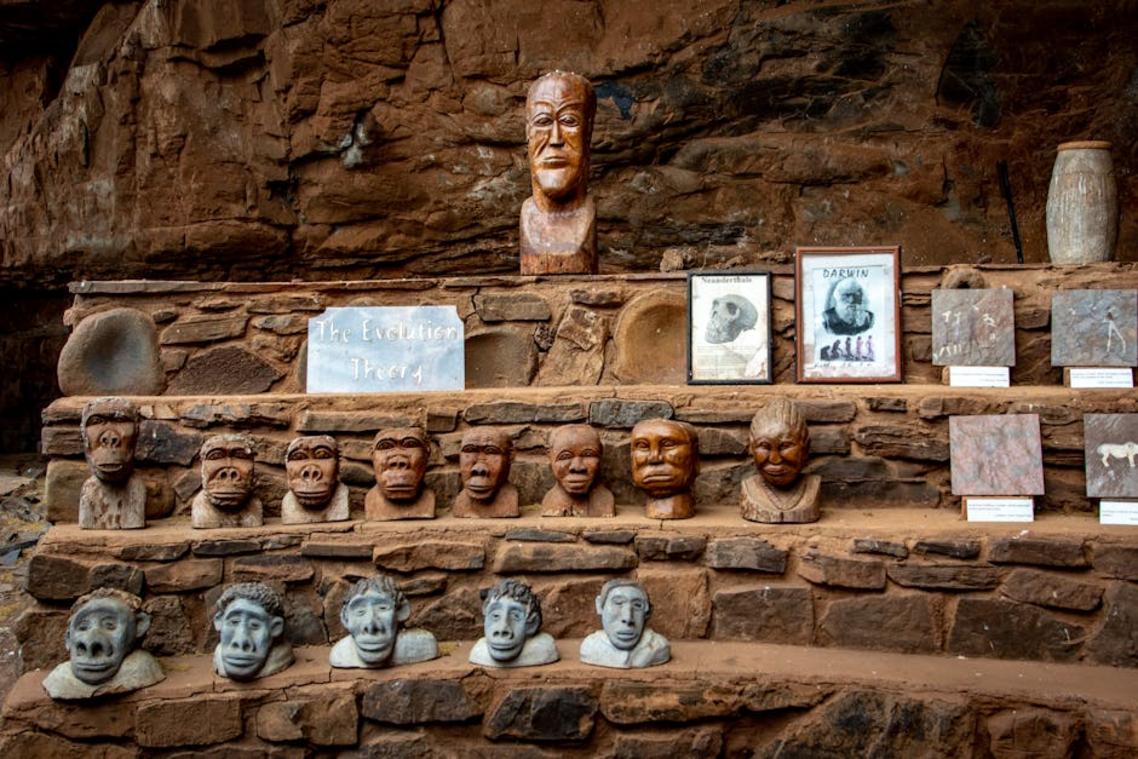

I still remember spinning up a tiny local server just to see how early web pages loaded (yes, it felt like digital archaeology). Back then, learning about HTTP/0.9 was like discovering a stone tool from the dawn of the internet.

HTTP/0.9 was the one-liner protocol. One method—GET. No headers (metadata sent with a request or response). No status codes (numeric messages like 404 Not Found that explain what happened). Its entire job was simple: fetch an HTML file. That was it.
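For contrast with later versions, an entire HTTP/0.9 request fits on one line: the method, the path, and a line ending, with no version token and no headers. The minimal Python sketch below builds one; `build_http09_request` is a hypothetical helper name and the path is just an example. The server would answer with raw HTML and then close the connection, since there was no header to announce the length.

```python
def build_http09_request(path: str) -> bytes:
    """Build an HTTP/0.9 request line: 'GET <path>' plus CRLF, nothing else.

    No version, no headers: the server replies with raw HTML and closes
    the connection to mark the end of the document.
    """
    if not path.startswith("/"):
        raise ValueError("path must be absolute, e.g. '/index.html'")
    return f"GET {path}\r\n".encode("ascii")


print(build_http09_request("/index.html"))  # b'GET /index.html\r\n'
```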

However, as websites began adding images, audio clips, and eventually video, the cracks showed. Without a Content-Type header (which tells the browser what kind of file is being sent), browsers were guessing. And guessing is fine for trivia night, not for infrastructure.

Then came HTTP/1.0—a major leap forward. Suddenly, we had version numbers, status codes, headers, and new methods like POST (used to send data to a server) and HEAD (which retrieves metadata only). It felt like the web had grown up overnight.
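Here is a minimal Python sketch of reading the pieces HTTP/1.0 introduced: a status line carrying a version and a numeric code, then headers such as Content-Type, then a blank line and the body. The function `parse_http10_response` and the sample response are illustrative assumptions; a real parser must also handle repeated headers, folded lines, and malformed input.

```python
def parse_http10_response(raw: bytes) -> tuple[str, int, str, dict[str, str], bytes]:
    """Split a raw HTTP/1.0 response into status line parts, headers, and body."""
    head, _, body = raw.partition(b"\r\n\r\n")  # a blank line ends the headers
    lines = head.decode("iso-8859-1").split("\r\n")
    # Status line, e.g. "HTTP/1.0 404 Not Found"; the reason phrase may be empty.
    parts = lines[0].split(" ", 2)
    version, code = parts[0], int(parts[1])
    reason = parts[2] if len(parts) > 2 else ""
    headers = {}
    for line in lines[1:]:
        name, _, value = line.partition(":")
        # Header names are case-insensitive, so store them lowercased.
        headers[name.strip().lower()] = value.strip()
    return version, code, reason, headers, body


raw = (
    b"HTTP/1.0 200 OK\r\n"
    b"Content-Type: image/png\r\n"
    b"Content-Length: 4\r\n"
    b"\r\n"
    b"\x89PNG"
)
version, status, reason, headers, body = parse_http10_response(raw)
print(version, status, reason, headers["content-type"])  # HTTP/1.0 200 OK image/png
```

With a Content-Type header, the browser no longer has to guess that these bytes are a PNG image.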

But there was a catch. By default, every request required its own TCP connection: one file, one connection. As pages grew more complex, that repeated connection setup piled up latency. That bottleneck pushed the next phase of HTTP evolution into motion.
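A back-of-the-envelope model shows why one connection per file hurt. This Python sketch assumes each TCP handshake and each request/response exchange costs exactly one round trip, and it ignores TLS, bandwidth, TCP slow start, and the parallel connections browsers actually opened; `sequential_fetch_ms` is a hypothetical helper. The `reuse_connection=True` case shows what keeping a single connection open would save.

```python
def sequential_fetch_ms(resources: int, rtt_ms: float, reuse_connection: bool) -> float:
    """Rough latency for fetching resources one after another.

    Simplifying assumptions: one round trip per TCP handshake, one round
    trip per request/response, and no transfer time, TLS, or parallelism.
    """
    handshakes = 1 if reuse_connection else resources
    return (handshakes + resources) * rtt_ms


# A page with 1 HTML file and 9 images over a 100 ms round trip:
print(sequential_fetch_ms(10, 100, reuse_connection=False))  # 2000
print(sequential_fetch_ms(10, 100, reuse_connection=True))   # 1100
```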

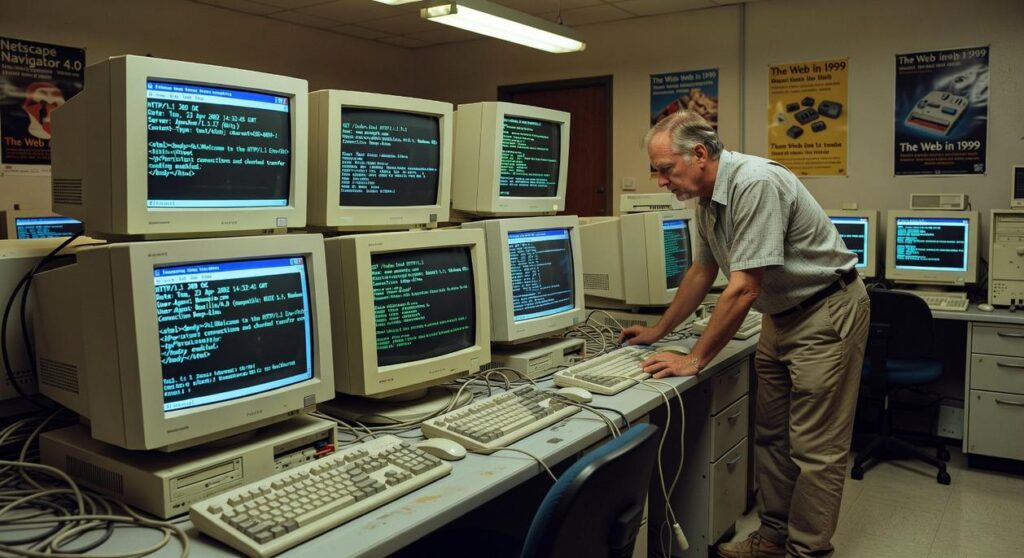

The Workhorse Era: HTTP/1.1 – The Protocol That Ruled for Decades

HTTP/1.1 didn’t just tweak the web—it gave it endurance. Its landmark feature was persistent connections, often called Keep-Alive. Instead of opening a new TCP connection (Transmission Control Protocol, the system that ensures reliable data delivery) for every single file, browsers could reuse one connection for multiple requests. Fewer handshakes meant lower latency—the delay before data starts transferring. In the dial-up days, that difference felt like upgrading from a bicycle to a motorbike (cue the Windows XP startup sound).
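You can watch connection reuse happen locally with nothing but the Python standard library. In this sketch, a throwaway `http.server` handler (our own example, set to HTTP/1.1 so it keeps connections open) records the client’s source port for each request while `http.client.HTTPConnection` fetches three paths. Identical ports mean all three requests traveled over the same TCP connection. The handler name and paths are illustrative only.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

seen_client_ports = []  # client-side source port, one entry per handled request


class RecordingHandler(BaseHTTPRequestHandler):
    protocol_version = "HTTP/1.1"  # http.server keeps connections open only for HTTP/1.1

    def do_GET(self):
        seen_client_ports.append(self.client_address[1])
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain")
        # Content-Length tells the client where this response ends,
        # so the connection can stay open for the next request.
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the demo output quiet


server = ThreadingHTTPServer(("127.0.0.1", 0), RecordingHandler)  # port 0: any free port
threading.Thread(target=server.serve_forever, daemon=True).start()

conn = http.client.HTTPConnection("127.0.0.1", server.server_address[1])
for path in ("/index.html", "/style.css", "/app.js"):
    conn.request("GET", path)
    conn.getresponse().read()  # drain the body before reusing the connection
conn.close()
server.shutdown()
server.server_close()

# Three requests, one distinct client port: a single persistent TCP connection.
print(len(seen_client_ports), len(set(seen_client_ports)))  # 3 1
```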

Then came pipelining. In theory, browsers could send multiple requests without waiting for each response. It sounded brilliant, like lining up coffee orders before the barista finishes the first. However, responses still had to come back in the order the requests were sent, so a single slow response stalled everything behind it, a problem known as Head-of-Line (HOL) blocking. Critics argue pipelining was overhyped because inconsistent server and proxy support made it unreliable, and browsers largely shipped it disabled. Fair point. Still, its shortcomings made clear exactly what the next protocol redesign in HTTP evolution had to fix.
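Head-of-Line blocking is easy to quantify. This Python sketch models only the rule that pipelined responses must be returned in request order and treats transfer time as negligible; `pipelined_delivery_times` is a hypothetical helper and the timings are made-up inputs.

```python
def pipelined_delivery_times(ready_ms: list[float]) -> list[float]:
    """When each pipelined response can reach the client.

    Responses must go out in request order, so response i cannot be sent
    before response i-1, even if it was ready earlier.
    """
    delivered = []
    previous = 0.0
    for ready in ready_ms:
        previous = max(previous, ready)
        delivered.append(previous)
    return delivered


# The first response takes 500 ms to generate; the other two are ready at 20 ms.
print(pipelined_delivery_times([500, 20, 20]))  # [500, 500, 500]
```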

Another quiet revolution was the Host header. By specifying which domain a client wanted, multiple websites could share one IP address. This enabled virtual hosting—the backbone of modern shared hosting services.
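Conceptually, virtual hosting is a lookup keyed on the Host header. The Python sketch below uses hypothetical `*.example.com` sites and a hypothetical `route_by_host` helper; it strips an optional port and returns 400 when the header is missing, since HTTP/1.1 requires it. Real servers layer default-site fallbacks, IPv6 literal handling, and TLS SNI on top of this idea.

```python
# Hypothetical sites that share a single IP address.
SITES = {
    "blog.example.com": "<h1>Blog</h1>",
    "shop.example.com": "<h1>Shop</h1>",
}


def route_by_host(headers: dict[str, str]) -> tuple[int, str]:
    """Choose which site to serve based on the Host header."""
    raw_host = headers.get("host")
    if not raw_host:
        # HTTP/1.1 requires Host; servers reject requests without it.
        return 400, "Bad Request"
    host = raw_host.split(":", 1)[0].lower()  # drop an optional ":port"
    if host in SITES:
        return 200, SITES[host]
    return 404, "Unknown site"  # real servers often fall back to a default site


print(route_by_host({"host": "shop.example.com:8080"}))  # (200, '<h1>Shop</h1>')
```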

HTTP/1.1 also refined caching with ETag (a unique version identifier for resources) and Cache-Control directives. These tools reduced redundant downloads and server strain.
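ETags enable conditional requests: the client sends back the ETag it cached in an If-None-Match header, and the server replies 304 Not Modified with no body if nothing changed. This Python sketch assumes a content-hash ETag (one common choice, not a requirement) and hypothetical helper names; it ignores weak validators (`W/"..."`) for brevity.

```python
import hashlib
from typing import Optional


def make_etag(body: bytes) -> str:
    """Derive a strong ETag from the content itself (one common approach)."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def respond(body: bytes, if_none_match: Optional[str]) -> tuple[int, dict[str, str], bytes]:
    """Serve `body`, or a bodiless 304 if the client's cached copy is current."""
    etag = make_etag(body)
    headers = {"ETag": etag, "Cache-Control": "max-age=60"}
    if if_none_match is not None:
        # If-None-Match may list several ETags, or "*" for "any version".
        candidates = [tag.strip() for tag in if_none_match.split(",")]
        if "*" in candidates or etag in candidates:
            return 304, headers, b""
    return 200, headers, body


page = b"<h1>Hello</h1>"
status, headers, _ = respond(page, None)            # first visit: full 200
status2, _, body2 = respond(page, headers["ETag"])  # revalidation
print(status, status2, body2)  # 200 304 b''
```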

| Feature | Problem Solved | Impact |
|---------|----------------|--------|
| Keep-Alive | Repeated TCP handshakes | Lower latency |
| Pipelining | Sequential request delays | Faster in theory |
| Host Header | One site per IP limit | Enabled virtual hosting |
| ETag/Cache-Control | Unnecessary reloads | Better caching |

For a broader historical arc, see the rise and fall of obsolete data transfer standards.

The Need for Speed: HTTP/2 – Rebuilding for Performance

HTTP/2 wasn’t just a tune-up. It was a REBUILD. And honestly, it had to be.

From Text to Binary

HTTP/1.1 was human-readable text. That sounds charming, but browsers aren’t English majors. Parsing text-based headers line by line created inefficiencies and subtle errors. HTTP/2 switched to a binary protocol—meaning data is framed in compact binary code instead of plain text. Binary framing is faster for machines to interpret and far less ambiguous (computers prefer precision over poetry). The result? Fewer parsing mistakes and measurable performance gains, especially under load.
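Binary framing means every HTTP/2 frame starts with the same fixed 9-byte header: a 24-bit payload length, an 8-bit type, 8 bits of flags, one reserved bit, and a 31-bit stream identifier (RFC 9113, section 4.1). A parser reads fixed-size fields instead of scanning text for line endings. This Python sketch packs and unpacks that header with the standard `struct` module; the helper names are our own.

```python
import struct


def encode_frame_header(length: int, frame_type: int, flags: int, stream_id: int) -> bytes:
    """Pack the fixed 9-byte HTTP/2 frame header."""
    if not 0 <= length < 2**24:
        raise ValueError("length must fit in 24 bits")
    # The 24-bit length is split into a high byte and a 16-bit remainder,
    # then type, flags, and the stream ID with the reserved top bit cleared.
    return struct.pack(">BHBBI", length >> 16, length & 0xFFFF,
                       frame_type, flags, stream_id & 0x7FFFFFFF)


def decode_frame_header(header: bytes) -> tuple[int, int, int, int]:
    """Unpack (length, type, flags, stream_id) from a 9-byte frame header."""
    high, low, frame_type, flags, stream_id = struct.unpack(">BHBBI", header)
    return (high << 16) | low, frame_type, flags, stream_id & 0x7FFFFFFF


header = encode_frame_header(13, 0x0, 0x1, 3)  # DATA frame, END_STREAM flag, stream 3
print(header.hex())                 # 00000d000100000003
print(decode_frame_header(header))  # (13, 0, 1, 3)
```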

Some argue readability mattered for debugging. Fair. But modern developer tools decode the binary frames for you, so hand-reading raw protocol text is rarely necessary. PERFORMANCE beats nostalgia every time.

Introducing Multiplexing

Here’s the real breakthrough: multiplexing. HTTP/2 allows multiple request and response streams to travel simultaneously over a single TCP connection. In HTTP/1.1, requests queued up like cars at a single-lane drive‑thru—classic Head‑of‑Line (HOL) blocking. Multiplexing opens all the lanes at once. Problem solved at the HTTP layer.

If HTTP evolution were a movie franchise, this would be the sequel where the hero finally learns to fly.

- Multiple streams share one connection

- No more application-level HOL blocking
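Multiplexing works because every frame carries its stream ID, so frames from different streams can interleave on one connection and still be sorted out by the receiver. This Python sketch models that with simple round-robin chunking; real HTTP/2 schedules frames using flow control and prioritization, and the helper names and payloads are illustrative. Odd stream IDs mirror HTTP/2’s convention for client-initiated streams.

```python
from collections import defaultdict


def interleave(streams: dict[int, bytes], chunk_size: int) -> list[tuple[int, bytes]]:
    """Split each stream into chunks and send them round-robin on one connection."""
    queues = {sid: [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]
              for sid, data in streams.items()}
    frames = []
    while any(queues.values()):
        for sid, chunks in queues.items():
            if chunks:
                frames.append((sid, chunks.pop(0)))
    return frames


def reassemble(frames: list[tuple[int, bytes]]) -> dict[int, bytes]:
    """Receiver side: group payloads by stream ID, keeping order within each stream."""
    buffers = defaultdict(bytearray)
    for sid, payload in frames:
        buffers[sid] += payload
    return {sid: bytes(buf) for sid, buf in buffers.items()}


streams = {1: b"<p>hello</p>", 3: b"body{color:red}", 5: b"console.log(1)"}
frames = interleave(streams, chunk_size=4)
print([sid for sid, _ in frames[:6]])  # [1, 3, 5, 1, 3, 5]
print(reassemble(frames) == streams)   # True
```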

Server Push

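Server Push lets servers proactively send assets (like CSS or JavaScript) before the browser explicitly requests them, anticipating what the page will need in order to speed up rendering. Critics say it can waste bandwidth when those predictions are wrong, for example by pushing files the browser already has cached. True, and tuning it proved hard enough in practice that Chrome removed Server Push support in 2022. When implemented carefully, though, it could make pages FEEL instant.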
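The decision at the heart of Server Push is a prediction: which assets to send before they are asked for. This minimal Python sketch assumes a hypothetical hand-maintained `PUSH_MANIFEST` and a hypothetical set of assets the client is believed to have cached. Real servers have no reliable view of the browser cache, which is exactly where wasted pushes come from.

```python
# Hypothetical manifest: which assets each page is known to need.
PUSH_MANIFEST = {
    "/index.html": ["/style.css", "/app.js", "/logo.png"],
    "/about.html": ["/style.css"],
}


def plan_pushes(path: str, likely_cached: set[str]) -> list[str]:
    """Return assets worth pushing alongside `path`.

    Assets the client probably already has are skipped; pushing them
    anyway is the wasted-bandwidth case critics point to.
    """
    return [asset for asset in PUSH_MANIFEST.get(path, []) if asset not in likely_cached]


print(plan_pushes("/index.html", likely_cached={"/style.css"}))  # ['/app.js', '/logo.png']
```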

Header Compression (HPACK)

Headers often repeat across requests. HPACK compresses this redundancy, reducing overhead and cutting latency—especially on mobile networks where every kilobyte counts (and yes, users notice). PRO TIP: optimize headers before blaming your CDN.
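HPACK’s core trick is indexing: both ends keep a table of header fields already sent, so a repeat can travel as a small index instead of the full text. The Python class below is a toy illustration of that idea only; the real HPACK format (RFC 7541) adds a predefined static table, a size-bounded dynamic table with eviction, and Huffman coding, none of which appear here.

```python
class ToyHeaderIndexer:
    """Toy version of HPACK's idea: repeated header fields become small indices.

    Not the real HPACK wire format: no static table, no size limit or
    eviction, no Huffman coding; indices are simple insertion positions.
    """

    def __init__(self):
        # In HPACK, the encoder and decoder each maintain a matching table.
        self.table = []

    def encode(self, headers):
        out = []
        for field in headers:
            if field in self.table:
                out.append(("indexed", self.table.index(field)))
            else:
                out.append(("literal", field))
                self.table.append(field)
        return out


encoder = ToyHeaderIndexer()
request = [(":method", "GET"), ("user-agent", "ExampleBrowser/1.0"), ("accept", "text/html")]
first = encoder.encode(request)
second = encoder.encode(request)  # the same headers on the next request
print(second)  # [('indexed', 0), ('indexed', 1), ('indexed', 2)]
```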

Beyond TCP: HTTP/3 and QUIC

Even after HTTP/2 solved application-level Head-of-Line blocking, TCP still held the leash. TCP delivers a single ordered byte stream, so when one packet was lost, every multiplexed stream stalled while waiting for the retransmission. That bottleneck pushed the next leap in HTTP evolution: HTTP/3, which runs over QUIC (originally short for Quick UDP Internet Connections), a transport built on UDP instead of TCP.
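The difference in loss behavior can be modeled in a few lines. In this Python sketch, packet *i* normally arrives at time *i*, one packet is lost and only arrives when retransmitted, and data is handed to the application once everything it must wait for has arrived. The helper and stream labels are hypothetical, and the model ignores congestion control and ACK timing.

```python
def delivery_times(packets, lost_index, retransmit_at, per_stream_order):
    """Return when each packet's data can be handed to the application.

    packets: the stream each packet belongs to, in send order. Packet i
    normally arrives at time i; the packet at lost_index arrives only at
    retransmit_at. per_stream_order=False models TCP (one ordered byte
    stream shared by all streams); True models QUIC (order is enforced
    only within each stream).
    """
    arrivals = [retransmit_at if i == lost_index else i for i in range(len(packets))]
    delivered = []
    for i, stream in enumerate(packets):
        # Data is released only once every earlier packet it depends on has arrived.
        must_wait_for = [arrivals[j] for j in range(i + 1)
                         if not per_stream_order or packets[j] == stream]
        delivered.append(max(must_wait_for))
    return delivered


packets = ["A", "B", "A", "B", "C"]  # three streams sharing one connection
tcp = delivery_times(packets, lost_index=0, retransmit_at=10, per_stream_order=False)
quic = delivery_times(packets, lost_index=0, retransmit_at=10, per_stream_order=True)
print(tcp)   # [10, 10, 10, 10, 10]
print(quic)  # [10, 1, 10, 3, 4]
```

Only stream A waits for the retransmission in the QUIC model; streams B and C keep flowing.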

Unlike TCP, QUIC tracks the ordering of each stream independently. If a packet drops, only the streams whose data it carried pause; the others continue uninterrupted. QUIC also folds the transport and TLS 1.3 handshakes into a single round trip, supports 0-RTT resumption so a returning client can send its request immediately, and uses connection IDs so sessions survive network switches, like moving from Wi-Fi to cellular. In short, it tackles latency at the transport layer itself: a quiet but decisive architectural shift.
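The handshake savings can be tallied in round trips. The Python sketch below uses simplified textbook counts: TCP needs one round trip before data can flow, a full TLS 1.2 handshake adds two and TLS 1.3 adds one, QUIC combines transport and TLS 1.3 setup into one, and 0-RTT lets a client resuming an earlier session send its request immediately. It assumes no packet loss and ignores TCP Fast Open; the 80 ms round trip is an arbitrary example.

```python
# Round trips spent on handshakes before the first HTTP request can be sent.
# Simplified figures: no packet loss, no TCP Fast Open; the 0-RTT entry
# assumes the client is resuming a previous session.
HANDSHAKE_RTTS = {
    "TCP + TLS 1.2": 3,
    "TCP + TLS 1.3": 2,
    "QUIC (new connection)": 1,
    "QUIC 0-RTT (resumed)": 0,
}


def time_to_first_response_ms(setup: str, rtt_ms: float) -> float:
    # Add one more round trip for the request itself and its response.
    return (HANDSHAKE_RTTS[setup] + 1) * rtt_ms


for setup in HANDSHAKE_RTTS:
    print(f"{setup}: {time_to_first_response_ms(setup, 80)} ms")
# TCP + TLS 1.2: 320 ms
# TCP + TLS 1.3: 240 ms
# QUIC (new connection): 160 ms
# QUIC 0-RTT (resumed): 80 ms
```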

Stay Ahead of the Curve

You came here to understand how digital infrastructure, emerging hardware trends, archived tech protocols, and HTTP evolution are shaping the modern tech landscape. Now you have the clarity to see how these moving pieces connect, and why they matter for your setup, strategy, and long-term scalability.

Technology doesn’t slow down. Protocols change. Hardware becomes obsolete. Standards evolve. And if you’re not paying attention, your systems fall behind—costing you performance, security, and opportunity.

The good news? You don’t have to guess your way forward.

Stay proactive. Monitor innovation alerts. Revisit archived tech protocols. Upgrade strategically instead of reactively. Most importantly, make sure your infrastructure evolves alongside HTTP and other emerging standards, not after they’ve already disrupted your workflow.

If you’re serious about staying ahead, now is the time to act. Tap into trusted tech insights, follow emerging hardware shifts, and implement proven setup strategies used by forward-thinking professionals. Don’t wait for outdated systems to create expensive problems.

Upgrade smarter. Adapt faster. Stay future-ready.

There is a specific skill involved in explaining something clearly, one that is completely separate from actually knowing the subject. Jelvith Rothwyn has both. They have spent years working with digital infrastructure in a hands-on capacity, and an equal amount of time figuring out how to translate that experience into writing that people with different backgrounds can actually absorb and use.

Jelvith tends to approach complex subjects (Digital Infrastructure Insights, Tech Setup Tutorials, and Knowledge Vault being good examples) by starting with what the reader already knows, then building outward from there rather than dropping them in the deep end. It sounds like a small thing. In practice it makes a significant difference in whether someone finishes the article or abandons it halfway through. They are also good at knowing when to stop, a surprisingly underrated skill. Some writers bury useful information under so many caveats and qualifications that the point disappears. Jelvith knows where the point is and gets there without too many detours.

The practical effect of all this is that people who read Jelvith's work tend to come away actually capable of doing something with it. Not just vaguely informed: actually capable. For a writer working in digital infrastructure insights, that is probably the best possible outcome, and it's the standard Jelvith holds their own work to.
